In this post we look at how mimicking realistic lighting conditions with HDRI maps in Blender can produce far more realistic 3D renders.

It’s common to try to build the desired look into the 3D objects themselves. In this tutorial, however, we look at letting external factors do the work, which very much mimics real-world conditions.

Applying Realism Through High Dynamic Range Images

Learning a new art form such as modelling or rendering can be daunting, but this isn’t always directly related to learning the software and its features.

It’s very much about understanding how things work in the real world before anything else. Only then do you start to understand what can be achieved in your renders!

Before you write me off as another “fluff expert”, allow me to explain!

How often have you found yourself randomly placing lamps around your scene, hoping your render will miraculously come out super realistic?

Well, answer me this: did it?

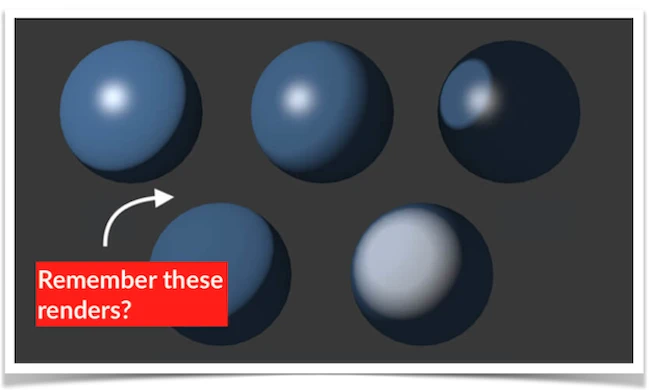

Say, for example, you wanted to create a metal ball bearing (such as the one shown at the top of the page). It would seem to make sense to make the model grey and then add a little shine. Tell me, was your render realistic? I am guessing not!

Then you read that metal actually reflects everything in its surroundings, much like a mirror, and you get that ‘eureka moment’: take a photograph, place it inside the ball bearing and add some shine… the render is a lot better, but is it realistic yet?

Do you see where I am coming from? If metal is actually just greyish in color and its shine reflects its surroundings, then rather than placing an image inside the sphere, simply place the image (such as an HDR image) on the outside of the scene.

It’s best to replicate real life conditions, rather than manipulating them!

Think about this for one second: light comes from the blue sky, the white clouds and the colors of surrounding buildings, and hits other objects (such as your sphere). That’s precisely why things start to render with realism: you are physically replicating what happens in real-life conditions.

So let’s walk through the simple steps to achieving realistic renders with the help of HDRIs… follow this 7-step process.


Quick Steps To Lighting Your Blender Scene with HDRI

- Step 1: Use Cycles Render.

- Step 2: Playing with Nodes.

- Step 3: Introducing HDR image to background.

- Step 4: Remove grain effect.

- Step 5: Improve brightness with math nodes.

- Step 6: Adding materials for realism.

- Step 7: Celebrate, you’re done!

Step 1: Using Cycles Render Engine On Blender

As you are probably already aware, this tutorial uses the ‘Cycles’ engine. Using Cycles as opposed to the Internal engine does come with some differences in how materials are set up on your model. So if you haven’t used Cycles before, setting up materials may feel a little foreign, but believe me, you will be amazed by the final results.

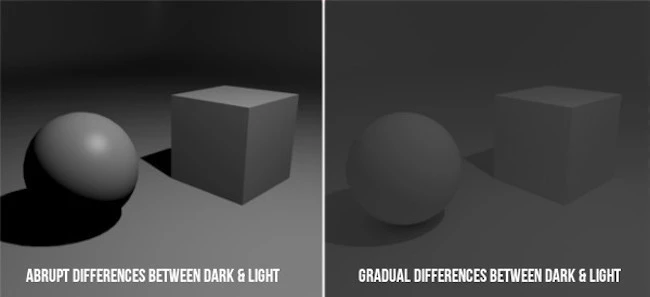

Cycles uses a different rendering and lighting algorithm that produces more physically accurate and realistic-looking results, whereas the Internal engine uses point lighting… look at the image above for example.

Notice how, with the Internal render engine, the difference between the light and dark areas is very abrupt.

With the Cycles render, notice how the shadows are not extremely dark… the transition between the lighter and darker areas is more gradual and therefore more realistic.

To further illustrate this, imagine being in a room with one window where the light comes in. In reality you do not get one side of an object lit up while the other side is pitch black. Light will in fact bounce off the walls, ceiling and other objects in the room, and this is precisely what Cycles does by calculating the ‘bounce lighting’.

Don’t forget that shadows are another aspect of bringing realism to your renders, and there is nothing worse than a pitch-black shadow knocking the realism out of them. The ‘bounce lighting’ algorithm in Cycles comes to the rescue again, adding gradual changes to the darkness of shadows that mimic what your eyes are used to seeing.
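If you prefer scripting to clicking, the engine switch can be sketched in Blender's Python API. This is a minimal sketch, not the only way to do it: the helper name `use_cycles` is made up for this post, and the stand-in scene object exists only so the snippet also runs outside Blender.

```python
from types import SimpleNamespace


def use_cycles(scene):
    """Switch the given scene to the Cycles render engine."""
    scene.render.engine = "CYCLES"
    return scene.render.engine


try:
    import bpy  # only available when running inside Blender
except ImportError:
    bpy = None

if bpy is not None:
    use_cycles(bpy.context.scene)
else:
    # Outside Blender: a hypothetical stand-in scene, for illustration only
    fake_scene = SimpleNamespace(render=SimpleNamespace(engine="BLENDER_RENDER"))
    print(use_cycles(fake_scene))
```

Inside Blender you would paste this into the Text Editor or the Python console; the active scene then renders with Cycles.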

Step 2: Nodes To Apply 360° HDRI Map As Background

In this step we want to apply the HDR image to our background and set it so that it wraps all the way around our scene by 360°.

You will notice that in this case the HDR image used as our background shows an urban area with blue sky and clouds. This replicates the outside environment and in some ways acts as our light source, providing the light and colors that directly hit our objects and reflect off them (giving the scene an added layer of realism).

To set the background, which is in essence our light source, and to apply materials to our objects (such as the ball bearing), we need to use nodes.

Setting up background HDRI image using Blender nodes

- Download an HDRI map; anything with an outdoor scene will do.

- Choose Add > Texture > Environment Texture to add the node, and link its Color output to the Color input of the Background node.

- Click the ‘Open’ button and select the HDRI file you just downloaded.

- Select the ‘Rendered’ view, which renders the scene in real time. You’ll now see the HDRI map set into the background.

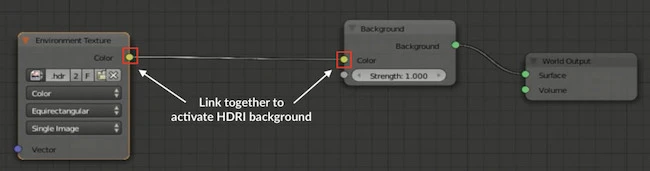

Note: Users often complain about Blender not showing the HDRI in the background. Without the background, we do not have a light source that reflects off our objects, and we therefore lack the realism we are looking for.

If the HDRI is not showing in Blender’s background, it is likely because, in the shader nodes, we’ve forgotten to link the HDRI image to the Color input of the Background node.
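The same node setup can be sketched with Blender's Python API, which also makes the all-important Color link explicit. Treat this as a rough sketch under a few assumptions: the function names are invented for this post, the HDRI path is whatever file you downloaded, and the world's existing nodes are wiped and rebuilt from scratch.

```python
def setup_hdri_world(world, image):
    """Build Environment Texture -> Background -> World Output on a world."""
    world.use_nodes = True
    nodes = world.node_tree.nodes
    links = world.node_tree.links
    nodes.clear()  # start from an empty node tree

    env = nodes.new("ShaderNodeTexEnvironment")
    env.image = image
    background = nodes.new("ShaderNodeBackground")
    output = nodes.new("ShaderNodeOutputWorld")

    # The link that is most often forgotten: HDRI color into the background
    links.new(env.outputs["Color"], background.inputs["Color"])
    links.new(background.outputs["Background"], output.inputs["Surface"])
    return env


def load_hdri_background(hdri_path):
    """Convenience wrapper; only works inside Blender."""
    import bpy  # imported lazily so the file can be read outside Blender

    scene = bpy.context.scene
    if scene.world is None:
        scene.world = bpy.data.worlds.new("World")
    image = bpy.data.images.load(hdri_path)
    return setup_hdri_world(scene.world, image)
```

In Blender you would call `load_hdri_background(...)` with the path to your own HDRI file, then switch to the ‘Rendered’ view to check the result.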

Step 3: Adding Materials To Objects

In order to make use of the background HDRI map (which is effectively our light source) we now need to add materials to our models. By adding certain materials, the render engine will now know how to calculate the light’s behaviour against the chosen material.

Remember, we are using the Cycles engine, which means the way we apply materials to our models is different from when you are using Blender’s Internal render engine.

In the above video (sidebar), we have a sphere and a cube in our scene, both of which I wanted to make look metallic… one shiny and the other not so much.

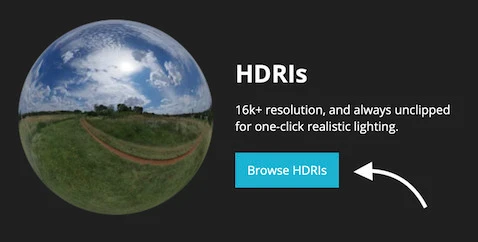
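For anyone who wants to script the two metals, here is one possible sketch. It assumes a Blender version that ships the Principled BSDF node (2.79 or later), which is my choice for the example and not necessarily the node setup used in the video. The helper names, the roughness values and the object names "Sphere" and "Cube" are assumptions too.

```python
def make_metal(materials, name, roughness, color=(0.8, 0.8, 0.8, 1.0)):
    """Create a simple metallic node material; lower roughness means shinier."""
    mat = materials.new(name=name)
    mat.use_nodes = True
    nodes = mat.node_tree.nodes
    links = mat.node_tree.links
    nodes.clear()  # start from an empty node tree

    shader = nodes.new("ShaderNodeBsdfPrincipled")
    shader.inputs["Base Color"].default_value = color
    shader.inputs["Metallic"].default_value = 1.0
    shader.inputs["Roughness"].default_value = roughness

    output = nodes.new("ShaderNodeOutputMaterial")
    links.new(shader.outputs["BSDF"], output.inputs["Surface"])
    return mat


def apply_metals():
    """Assign a shiny and a duller metal; only works inside Blender."""
    import bpy  # imported lazily so the file can be read outside Blender

    shiny = make_metal(bpy.data.materials, "ShinyMetal", roughness=0.05)
    dull = make_metal(bpy.data.materials, "DullMetal", roughness=0.4)
    # "Sphere" and "Cube" are assumed object names; adjust to your scene
    bpy.data.objects["Sphere"].data.materials.append(shiny)
    bpy.data.objects["Cube"].data.materials.append(dull)
```

The only difference between the two materials is the roughness value, which controls how sharply the HDRI is mirrored in the surface.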

Download FREE Blender HDRIs

Having a range of HDRIs gives you more flexibility in how your final rendered scene looks. The issue is that it can be challenging to find one place that offers them all, and for FREE.

Luckily, HDRI Haven is such a place: it offers awesome unclipped HDRIs for your 3D renders for free, with no catches. They are licensed under CC0 (public domain), so just download what you want and use it however you like.

Step 4: Why Is The Blender Render Grainy?

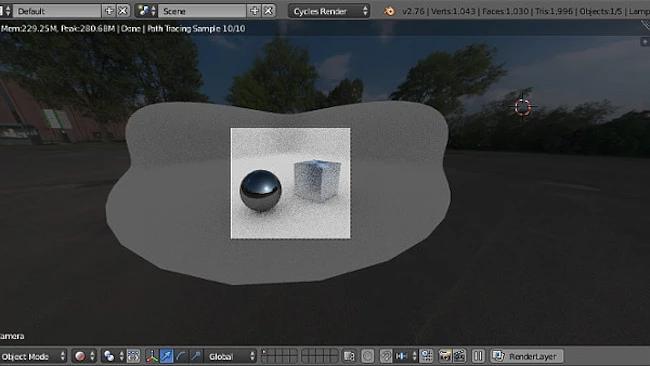

When rendering with Cycles for the first time, you will notice an instant grainy effect followed by a gradual improvement. This is the algorithm calculating real-life lighting conditions until it feels it has got it right.

However, depending on your Cycles render settings, the result can still be a little grainy. It can do with some tweaking to get that crisp, sharp and realistic finish. After all, we want to take advantage of that high-definition image to provide more realism.

There are two factors that can cause a grainy effect:

- The image: The larger the image resolution, the more pixels and the crisper the image. The last thing you want to do is use a low-quality, pixelated image, because those low-quality pixels will reflect off your models.

- Samples: The samples setting allows you to improve the quality of the render. It is often a compromise between quality and render time, as high-quality renders can significantly increase waiting time. Here you simply increase the samples value to reduce the grain in your renders.
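To get a feel for why more samples mean less grain, here is a toy simulation in plain Python. It is not how Cycles works internally, just a sketch of the averaging idea: each "sample" is a random light path that either finds the light or misses it, and the 30% hit chance is an arbitrary assumption. In Blender itself the sample count is exposed to scripts as `scene.cycles.samples`.

```python
import random
import statistics


def render_pixel(samples, rng):
    """Estimate one pixel's brightness by averaging random light samples."""
    # Toy light path: it finds the light (1.0) 30% of the time, else 0.0
    hits = sum(1.0 for _ in range(samples) if rng.random() < 0.3)
    return hits / samples


def grain(samples, pixels=500, seed=1):
    """Spread of brightness across pixels that should all look identical."""
    rng = random.Random(seed)
    values = [render_pixel(samples, rng) for _ in range(pixels)]
    return statistics.pstdev(values)


for n in (16, 64, 256, 1024):
    print(f"{n:5d} samples -> grain {grain(n):.4f}")
```

Roughly speaking, you need four times the samples to halve the grain, which is why render times climb so quickly when you chase a perfectly clean image.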

Step 5: Render The Final Scene

Press F12 to create the final render, wait a few minutes… You’re done!

Why Your Renders May Never Look Realistic

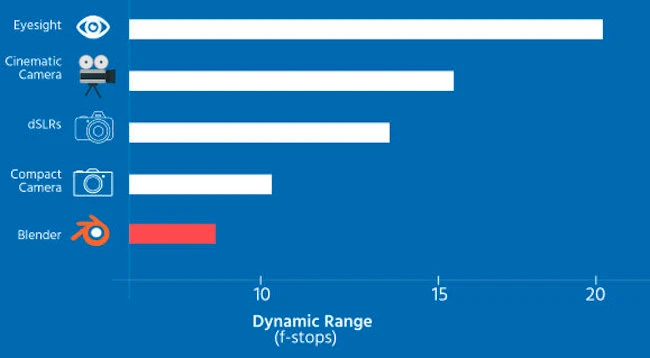

I am going to let you in on a little secret that 99% of people do not realise, and it is all about dynamic range.

Just like a digital camera, the camera inside a 3D modelling package also has a dynamic range, which in essence is the camera’s ability to pick up high and low intensities of light in one shot without too much over- or underexposure.

Blender’s camera has a lower dynamic range than a simple compact digital camera, which means that getting true photo-realism is going to be far from possible no matter what you do.
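Dynamic range is usually counted in stops, where each stop is a doubling of light. As a quick sketch of the arithmetic, the snippet below converts a brightest-to-darkest ratio into stops and back. The function names and the contrast ratios are made up for illustration and are not measurements of any camera or of Blender.

```python
import math


def dynamic_range_stops(brightest, darkest):
    """Number of doublings of light between the darkest and brightest value."""
    if brightest <= 0 or darkest <= 0:
        raise ValueError("luminance values must be positive")
    return math.log2(brightest / darkest)


def contrast_ratio(stops):
    """Inverse of the above: each extra stop doubles the ratio."""
    return 2.0 ** stops


# Hypothetical contrast ratios, chosen only to illustrate the arithmetic
for ratio in (256, 1024, 16384):
    print(f"{ratio}:1 is {dynamic_range_stops(ratio, 1):.1f} stops")
```

Because the scale is logarithmic, a few extra stops make a big difference: three more stops means the brightest detail you can capture is eight times brighter relative to the darkest.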

Determining F-Stops For Various Equipment

Below is a comparison of dynamic ranges in stops, with the eye being the best:

[table id=8 /]

But never fear!

Thanks to Andrew Price’s YouTube video on ‘The Secret Ingredient to Photorealism’, it is possible to increase that dynamic range by more than three times (x3).

I highly recommend watching the video.

FAQ

What are HDR Images?

High Dynamic Range Imaging is a technique used to create images that have a luminance range similar to that experienced by the human eye. Simply put, an HDR image is a closer representation of what we normally experience than an ordinary picture taken with a digital camera.

This is made possible because with HDR, you are combining several shots of the same scene taken at different exposures and light intensities, resulting in amazing quality.
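As a rough illustration of that combining step, here is a simplified sketch in plain Python. It assumes a perfectly linear, noise-free sensor and uses a basic weighted average that trusts mid-tones and ignores clipped pixels; real HDR software is more sophisticated. The function names, scene values and exposure times are invented for the example.

```python
def photograph(radiance, exposure_time):
    """Simulate one shot: brightness scales with exposure, clipped at white (1.0)."""
    return [min(1.0, r * exposure_time) for r in radiance]


def merge_exposures(shots):
    """Merge (exposure_time, pixels) shots into one HDR radiance estimate."""
    merged = []
    for i in range(len(shots[0][1])):
        weighted_sum = 0.0
        weight_total = 0.0
        for exposure_time, pixels in shots:
            value = pixels[i]
            # Trust mid-tones most; pure black or pure white gets weight 0
            weight = 1.0 - abs(2.0 * value - 1.0)
            weighted_sum += weight * (value / exposure_time)
            weight_total += weight
        merged.append(weighted_sum / weight_total if weight_total > 0 else 0.0)
    return merged


# Hypothetical scene: a deep shadow, a mid-tone and a bright highlight
scene = [0.02, 0.5, 4.0]
shots = [(t, photograph(scene, t)) for t in (0.125, 1.0, 8.0)]

print("single shot:", photograph(scene, 1.0))  # the highlight clips to white
print("merged HDR: ", merge_exposures(shots))
```

The single exposure loses the highlight because it clips to white, while the merged result keeps the shadow and the highlight at their true relative brightness. That extra range is what lets an HDRI act as a believable light source.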

What are Blender nodes?

Nodes are essentially blocks with a choice of settings that can be interconnected or routed together in order to create a variety of complex material textures and appearances.

What are the Main Differences between Cycles and Internal Render Engine?

Answering this question can go into great depth and involve several technical terms that often baffle me. So I felt it would be easier to compare the two engines in the form of a bullet list.

- Blender Internal is a biased engine, which essentially means it ignores some lighting that would ordinarily be in the scene. Often the artist has to ‘fake’ the scene by adding other light sources or manipulating global settings to achieve the desired results.

- The Cycles engine, on the other hand, is unbiased. It uses an algorithm that mathematically simulates real lighting behaviour, which is why final renders generally appear far more realistic than those from Blender’s Internal engine.

- Generally speaking, there is a big difference in render speeds between the two.

- Which is faster? Well, in terms of render time, Cycles does take far longer as there are far more calculations to be made. However, setting up your scene is far quicker with Cycles, as you need not spend time tweaking lighting conditions to mimic real life… you just let Cycles do its job… therefore, overall, I’d say there is not much difference in it.

Closing Thoughts On Using HDRIs For Realistic Lighting

Having to physically add materials, textures and external lights directly onto your models to “match” the environment is time consuming and very rarely does a good job in terms of realism. Often your models look like they don’t belong in the scene.

How often have you seen a nice render of a sports car that just doesn’t belong in the scene? The shadow color or diffusion doesn’t quite look right, or maybe the body doesn’t reflect enough sunlight, even though it is a sunny day.

That’s when using HDRI maps as your background comes to save the day. The light emitted from the map will reflect off the models in the scene, in much the same way as happens in real-life conditions. Mimicking real-life conditions will generally bring more realism to your renders!